In the current AI arms race, the industry has been obsessed with the “smaller is better” mantra. From Nvidia’s Blackwell to AMD’s latest Instinct series, the goal has been to shrink transistors down to 4nm or 3nm to squeeze out every possible drop of performance. But a Taiwanese startup called Skymizer is attempting to flip the script, suggesting that the path to sustainable, massive-scale AI doesn’t require the newest chips, but rather a smarter way to use old ones.

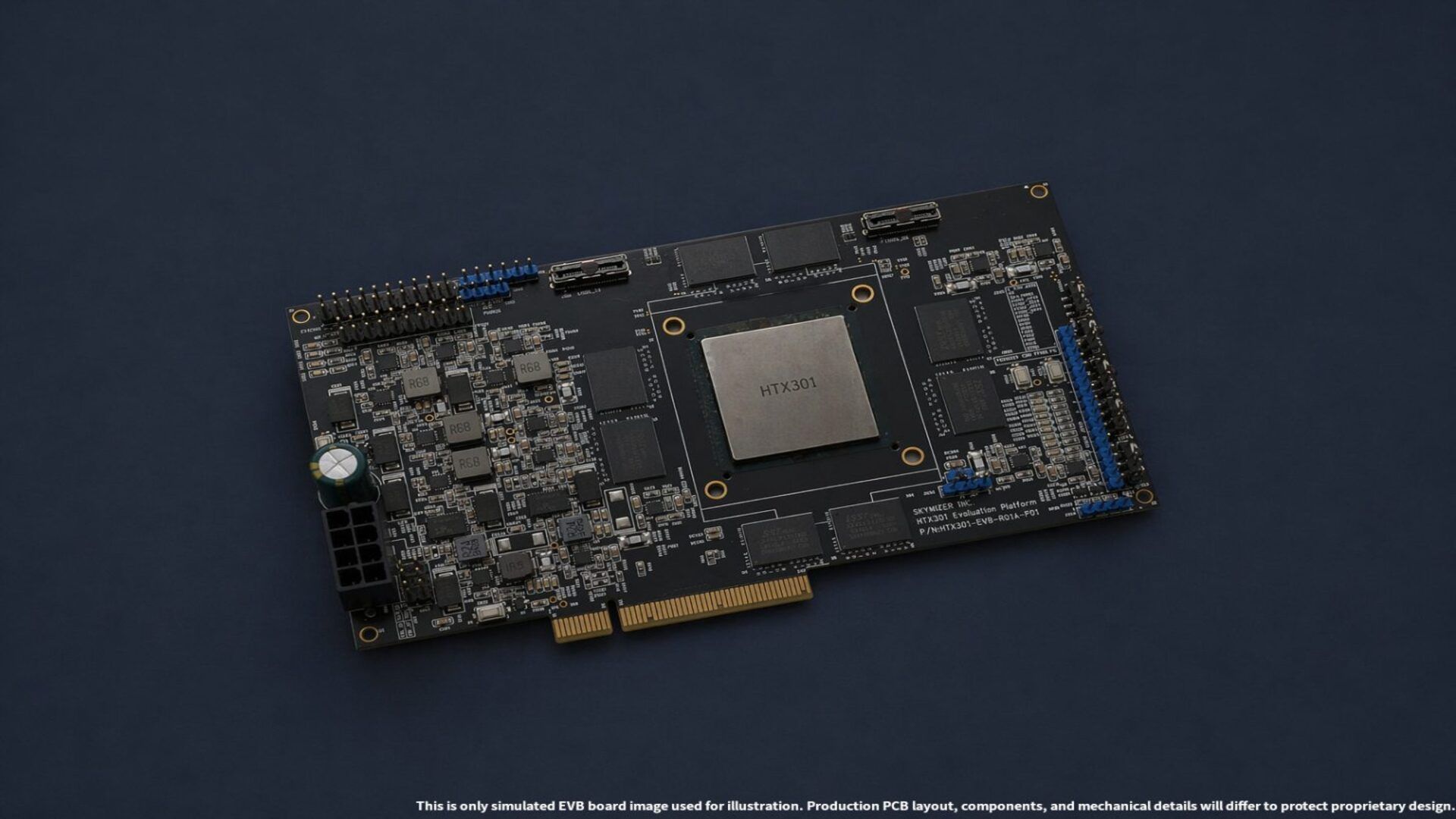

Skymizer has unveiled the HTX301, a PCIe AI accelerator that purports to run large language models (LLMs) with up to 700 billion parameters on a single device. While the capacity is staggering, the real shock is the architecture: the card relies on 28-nanometer chips—technology that is considered outdated by modern semiconductor standards—and standard LPDDR4 and LPDDR5 memory. By eschewing the expensive, power-hungry High Bandwidth Memory (HBM) used by industry giants, Skymizer claims it can deliver massive inference capabilities at a fraction of the energy cost.

For those of us who spent years in software engineering before moving into reporting, this approach is a fascinating pivot. It suggests that the bottleneck for AI isn’t necessarily raw compute power (TFLOPS), but rather memory capacity and the energy efficiency of moving data. If the HTX301 performs as advertised, it could decouple the ability to run “frontier-class” models from the need for multi-million dollar, liquid-cooled data centers.

The Architecture of Efficiency: Why 28nm?

The HTX301 is built on Skymizer’s proprietary HyperThought platform, which utilizes a next-generation Language Processing Unit (LPU) IP. Instead of trying to compete with Nvidia on raw floating-point operations, Skymizer has focused on the specific workloads associated with LLM inference. Each PCIe card integrates six HTX301 chips working in tandem, providing a total memory capacity of 384 GB.

The decision to use 28nm chips and LPDDR memory is a strategic move to lower the barrier to entry. HBM, while extremely fast, is expensive and difficult to manufacture, creating a supply chain bottleneck that has left many enterprises waiting months for hardware. By using standard memory, Skymizer can scale capacity more easily. To compensate for the lower bandwidth of LPDDR compared to HBM, the company employs advanced compression techniques for both model weights and the KV (key-value) cache.
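To see why compression is essential to the 700-billion-parameter claim, it helps to run the capacity numbers. The sketch below is a back-of-envelope calculation, not Skymizer's method: it assumes decimal gigabytes, ignores the KV cache and activations entirely, and the function names are made up for illustration.

```python
def bits_per_param_budget(capacity_gb: float, params_billions: float) -> float:
    """Average bits available per parameter if weights alone filled the memory.

    Assumption: decimal gigabytes (1 GB = 1e9 bytes) and no space reserved
    for the KV cache or activations, so this is an optimistic upper bound.
    """
    capacity_bits = capacity_gb * 1e9 * 8
    return capacity_bits / (params_billions * 1e9)


def weight_footprint_gb(params_billions: float, bits_per_param: float) -> float:
    """Memory needed for the weights alone at a given precision, in GB."""
    return params_billions * 1e9 * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    # Figures from the article: 384 GB per card, 700B parameters.
    budget = bits_per_param_budget(384, 700)
    print(f"Budget: {budget:.2f} bits per parameter")  # Budget: 4.39 bits per parameter

    for bits in (16, 8, 4):
        gb = weight_footprint_gb(700, bits)
        verdict = "fits" if gb <= 384 else "does not fit"
        print(f"{bits:>2}-bit weights: {gb:.0f} GB ({verdict} in 384 GB)")
```

Under these assumptions, a 700B model fits on one card only at roughly 4 bits per parameter or less, and even then the KV cache has to share what little space remains. That is presumably why the company compresses both the weights and the cache.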

According to the company, these optimizations allow the HTX301 to outperform open-source tools like llama.cpp by 9 to 17.8 percent in efficiency. The claimed result is a card that can deliver 30 tokens per second with just 0.5 TOPS at 100 GB per second bandwidth—a lean profile that challenges the “brute force” method of modern GPU clusters.
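The quoted figures can be sanity-checked with a common rule of thumb: when generating one token at a time, a model has to read essentially all of its weights from memory for each token, so throughput is capped by bandwidth divided by model size. The sketch below applies that rule to the article's numbers. It is a rough estimate under that assumption, the function names are hypothetical, and the source does not say which model the 30 tokens per second figure refers to.

```python
def gb_read_per_token(bandwidth_gb_s: float, tokens_per_s: float) -> float:
    """Data that can be streamed from memory per generated token, in GB."""
    return bandwidth_gb_s / tokens_per_s


def giga_ops_per_token(tops: float, tokens_per_s: float) -> float:
    """Compute budget per generated token, in billions of operations."""
    return tops * 1e12 / tokens_per_s / 1e9


def max_tokens_per_s(bandwidth_gb_s: float, model_gb: float) -> float:
    """Bandwidth-bound ceiling, assuming every weight is read once per token."""
    return bandwidth_gb_s / model_gb


if __name__ == "__main__":
    # Figures from the article: 30 tokens/s, 0.5 TOPS, 100 GB/s.
    print(f"{gb_read_per_token(100, 30):.2f} GB read per token")     # 3.33 GB read per token
    print(f"{giga_ops_per_token(0.5, 30):.2f} giga-ops per token")   # 16.67 giga-ops per token

    # Illustrative only: a hypothetical 350 GB compressed model at the same bandwidth.
    print(f"{max_tokens_per_s(100, 350):.2f} tokens/s ceiling")      # 0.29 tokens/s ceiling
```

The last line is the useful one for readers weighing the 700B claim: at 100 GB per second, a model that fills most of the card would be limited to a fraction of a token per second, so the card's real aggregate bandwidth across its six chips matters at least as much as its capacity.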

Breaking the Hyperscale Dependency

For most enterprises, the dream of deploying a 700-billion-parameter model has been gated by “hyperscale” requirements. Running these models typically requires a cluster of H100s or A100s, which necessitates specialized power grids and intensive cooling systems. This has forced companies into the cloud, introducing significant privacy concerns regarding data sovereignty and unpredictable monthly operational costs.

The HTX301 targets the “on-premises” gap. Because the card consumes only 240 watts—less than half of what many leading PCIe accelerators require—it can fit into standard air-cooled servers without requiring a total redesign of data center infrastructure. This opens the door for “Agentic AI”—autonomous systems capable of coding, automation, and complex domain-specific workflows—to run locally on a company’s own hardware.

The implications for data security are substantial. When a model resides on a local PCIe card rather than a shared cloud instance, the risk of data leakage is reduced, and the latency associated with cloud round-trips is eliminated. For industries like healthcare, defense, or high finance, this shift from “cloud-first” to “local-first” is a critical requirement for adoption.

Hardware Comparison: Skymizer vs. The Giants

To understand the scale of Skymizer’s claims, it is helpful to look at how the HTX301 stacks up against current industry benchmarks in terms of power and memory philosophy.

| Feature | Skymizer HTX301 | Nvidia RTX PRO 6000 (Blackwell) | AMD Instinct MI350P |
|---|---|---|---|
| Process Node | 28nm | Modern (4nm/etc) | Modern (Advanced) |
| Memory Type | LPDDR4/LPDDR5 | GDDR/HBM | HBM3E |
| Power Draw | ~240W | ~600W | High (Cluster-dependent) |
| Max Model Support | Up to 700B Parameters | Varies by VRAM | High (via scaling) |
| Cooling | Standard Air | Air/Liquid | Liquid/Advanced Air |

The Reality Check: From Paper to Production

Despite the impressive specifications, the AI industry is littered with startups that promised “Nvidia-killers” only to fail during real-world deployment. The primary question remains: can 28nm silicon actually handle the throughput required for a 700B parameter model without becoming a bottleneck in practice?

While the power efficiency is a clear win, the tradeoff is often latency. The company claims the card can deliver 240 tokens per second on Llama2 7B workloads, but these figures have yet to be independently verified. In the world of LLMs, “tokens per second” is the gold standard of user experience; if the HTX301 struggles with high-concurrency requests, its utility may be limited to small-batch internal tools rather than customer-facing applications.
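One way to put the efficiency and throughput claims on the same scale is energy per token: power draw divided by tokens per second. The sketch below does that arithmetic. It assumes the roughly 240-watt card power and the claimed 240 tokens per second describe the same card under the same load, which the company's figures do not confirm, and the function names are illustrative.

```python
def joules_per_token(power_watts: float, tokens_per_s: float) -> float:
    """Energy spent per generated token (1 watt = 1 joule per second)."""
    return power_watts / tokens_per_s


def kwh_per_million_tokens(power_watts: float, tokens_per_s: float) -> float:
    """Same quantity expressed in kilowatt-hours per million tokens."""
    joules = joules_per_token(power_watts, tokens_per_s) * 1_000_000
    return joules / 3.6e6  # 1 kWh = 3.6 million joules


if __name__ == "__main__":
    # Assumption: 240 W card power and the claimed 240 tokens/s on Llama2 7B
    # apply to the same configuration at full load.
    print(f"{joules_per_token(240, 240):.2f} J per token")                  # 1.00 J per token
    print(f"{kwh_per_million_tokens(240, 240):.3f} kWh per million tokens")  # 0.278 kWh per million tokens
```

The same two functions can be pointed at any competing card once independent measurements of its power draw and throughput on an identical workload are available, which is the comparison that would actually settle the efficiency question.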

Beyond raw performance, the software ecosystem is where most AI hardware fails. Nvidia’s CUDA is a moat that is notoriously difficult to cross. For the HyperThought platform to succeed, Skymizer must provide a seamless software layer that allows developers to port their models without extensive rewriting of their codebases.

The next critical milestone for Skymizer will be its preview at Computex this year. This event will provide the first opportunity for independent engineers and analysts to verify the performance numbers and test the HTX301’s stability under load. If the card holds up to scrutiny, it could signal a shift toward a more pragmatic, energy-efficient era of AI hardware.
