For years, the relationship between content creators and the architects of artificial intelligence has been one of quiet extraction. Large language models (LLMs) have grown increasingly sophisticated by consuming vast swaths of the internet—blogs, digital art, journalistic archives, and forums—often without the explicit consent or compensation of the people who actually wrote the words or painted the pixels.

But the era of passive acceptance is ending. As AI companies continue to scrape the web to fuel the next generation of chatbots, a growing number of intellectual property holders are moving from legal complaints to technical warfare. They are employing a strategy known as data poisoning, using specialized tools to intentionally corrupt the training sets of these models.

Among these defenses, AI tarpits have emerged as a particularly crafty weapon for text-based creators. By tricking AI crawlers into ingesting useless or misleading information, creators are attempting to degrade the quality of AI outputs from the inside out, turning the models’ appetite for data into a liability.

Having worked in software engineering before moving into reporting, I’ve seen how these models rely on the assumption that the internet is a reliable, static library. But when the library begins to fight back, the technical foundation of the AI begins to shake. The goal isn’t just to hide data, but to make the act of stealing it costly for the AI company.

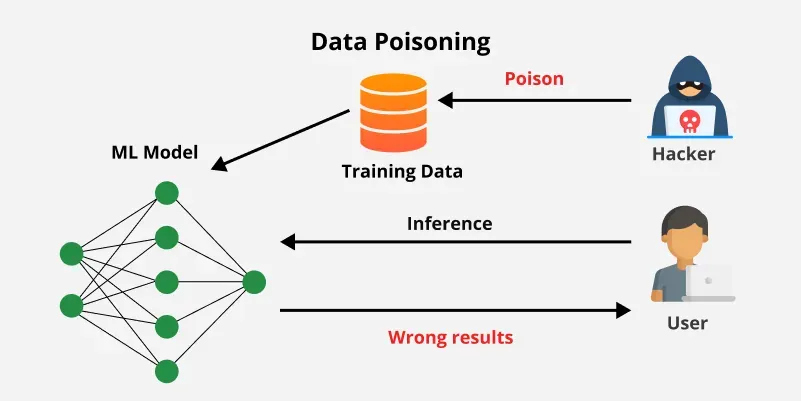

The Mechanics of AI Poisoning

At its core, AI poisoning is a form of adversarial attack. To stay current, chatbot makers periodically train their models on freshly collected data. If an AI company scrapes a website that contains “poisoned” data and that data ends up in a training set, the model can absorb those errors as if they were facts. Over time, this can lead to confidently wrong answers or outputs that are fundamentally broken.

This is a significant escalation from the traditional robots.txt file, a plain-text file at the root of a website that acts as a “do not enter” sign for web crawlers. Compliance with robots.txt is voluntary, and many AI companies have historically ignored these requests. Poisoning tools don’t ask for permission; they set a trap. Nothing in a scraper’s pipeline flags the poisoned data as bad, so the damage may only surface when end users notice the bot giving “bonkers” or incorrect responses.
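
To see why robots.txt is only a request, it helps to look at what a well-behaved crawler does with it. The sketch below uses Python’s standard-library `urllib.robotparser` to evaluate a sample robots.txt. The file contents and the example.com URL are assumptions for illustration. `GPTBot` (OpenAI) and `CCBot` (Common Crawl) are real crawler user-agent tokens, but each company’s documentation is the place to check current names.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt (an assumption for illustration): block two AI-related
# crawlers from the whole site and leave it open to everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(user_agent: str, url: str) -> bool:
    """Return True if the sample robots.txt lets `user_agent` fetch `url`.

    This only reports what the site *asks* for. Nothing forces a crawler
    to run this check before downloading the page.
    """
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://example.com/articles/ai-tarpits"  # hypothetical URL
    for agent in ("GPTBot", "CCBot", "Mozilla/5.0"):
        print(agent, is_allowed(agent, page))
```

The check runs entirely on the crawler’s side. A scraper that never performs it simply never sees the “do not enter” sign, which is why some creators have moved from signs to traps.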

Visual Sabotage: The Nightshade Approach

While text is the primary medium for chatbots, the battle began in earnest with visual art. Digital artists, facing a wave of AI generators that could mimic their unique styles in seconds, turned to tools developed by researchers at the University of Chicago.

One such tool, Nightshade, makes subtle changes to an image’s pixels. To a human eye, the artwork looks unchanged. To an AI model trained on it, however, those changes shift what the image appears to depict. For example, a picture of a dog might be “shaded” so that the model sees something closer to a cat. If enough artists use Nightshade, the model’s understanding of what a “dog” looks like begins to erode. Applied to an artist’s own work, the same technique aims to break the AI’s ability to mimic that artist’s style.

How AI Tarpits Trap Text Crawlers

Text-based creators such as journalists, bloggers, and novelists cannot use pixel-shifting tools, but they can use AI tarpits. A tarpit is designed to behave like a “honeypot” for AI crawlers. While a human reader sees a standard article, the crawler is lured into a loop of junk data or contradictory information.
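
Here is a minimal sketch of the “endless loop” idea. It assumes the tarpit lives under its own URL prefix (the hypothetical `/maze/`) that ordinary readers never reach. The function name `tarpit_page`, the word list, and the page layout are illustrative and are not taken from any particular tarpit tool. Every generated page contains filler text plus links to more generated pages, so a crawler that blindly follows links never reaches the end.

```python
import hashlib
import random

# Hypothetical filler vocabulary; a real tool might generate text with a
# Markov chain or another model instead of picking words at random.
WORDS = ["signal", "archive", "lattice", "quietly", "river", "protocol",
         "orange", "measure", "hollow", "between", "engine", "forgotten"]


def tarpit_page(path: str, n_words: int = 60, n_links: int = 5) -> str:
    """Build a junk HTML page for `path` that links to more junk pages.

    The RNG is seeded from a hash of the path, so the same URL always
    yields the same page, while each page points to `n_links` new,
    deeper URLs: a crawler that follows every link never runs out.
    """
    digest = hashlib.sha256(path.encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))

    text = " ".join(rng.choice(WORDS) for _ in range(n_words))
    base = path.rstrip("/")
    links = [f"{base}/{rng.getrandbits(32):08x}" for _ in range(n_links)]
    anchors = "\n".join(f'<a href="{href}">{href}</a>' for href in links)
    return f"<html><body>\n<p>{text}</p>\n{anchors}\n</body></html>"


if __name__ == "__main__":
    print(tarpit_page("/maze/start"))
```

Seeding the generator from the URL is a deliberate design choice. Revisiting a link returns identical content, so the maze looks like an ordinary static site rather than obviously random output. Real tarpits may also respond deliberately slowly to waste the crawler’s time; this sketch leaves that out.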

These tools exploit the way LLMs learn from text. A tarpit feeds the crawler vast amounts of useless, repetitive, or intentionally misleading text, seeding the scraped training corpus with “noise.” LLMs learn statistical patterns, essentially which tokens tend to follow which. As a result, a high volume of poisoned text can skew the model’s probability distributions, leading to a decline in the accuracy and coherence of the chatbot’s responses.
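
The effect on probability distributions can be shown with a deliberately tiny stand-in for a language model: a bigram counter that estimates which word follows which. Real LLMs are neural networks trained on billions of tokens, so this is only an analogy under that simplifying assumption. The corpora below are made up. Flooding the training text with one repeated false statement is enough to flip the most likely continuation.

```python
from collections import Counter, defaultdict


def bigram_model(corpus: list[str]) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the corpus."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_probs(model: dict[str, Counter], word: str) -> dict[str, float]:
    """Normalize the follow-counts for `word` into probabilities."""
    follows = model.get(word, Counter())
    total = sum(follows.values())
    return {w: c / total for w, c in follows.items()} if total else {}


# Made-up corpora: mostly accurate "human" text, then tarpit-style spam.
clean = ["The capital of France is Paris"] * 9 + ["The capital of France is lovely"]
poison = ["The capital of France is Berlin"] * 30

before = next_word_probs(bigram_model(clean), "is")
after = next_word_probs(bigram_model(clean + poison), "is")
print(before)  # {'paris': 0.9, 'lovely': 0.1}
print(after)   # {'paris': 0.225, 'lovely': 0.025, 'berlin': 0.75}
```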

The strategic intent is simple: if an AI company’s model becomes unreliable due to poisoned data, users will migrate to competitors. This creates a financial incentive for AI companies to actually respect consent and pay for high-quality, verified data rather than scraping the open web indiscriminately.

Comparing AI Defense Strategies

| Method | Target Medium | Mechanism | Primary Goal |
|---|---|---|---|
| Robots.txt | All Web Content | Request for exclusion | Prevention of access |
| Nightshade | Images/Art | Pixel manipulation | Style corruption |
| AI Tarpits | Text/Articles | Junk data injection | Output degradation |

The Risk of Model Collapse

The rise of AI tarpits coincides with a broader technical phenomenon known as “model collapse.” This occurs when AI models are trained on data that was itself generated by AI, rather than by humans. Research has shown that, over successive generations, this feedback loop causes models first to lose rare events (the tails of the data distribution) and eventually to produce gibberish.
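
A toy simulation captures the “forget rare events” part of this. Each generation below “trains” on the previous generation’s output by resampling it. This is the simplest possible stand-in for a model learning from data and then generating new data. The dataset and the function name `resample_generations` are hypothetical. The code illustrates the mechanism only; it does not reproduce the published experiments.

```python
import random


def resample_generations(data: list[str], generations: int, seed: int = 0) -> list[int]:
    """Toy model collapse: each generation is sampled from the previous one.

    'Training' is reduced to taking the previous generation's empirical
    distribution, and 'generating' to drawing a same-sized dataset from it.
    Returns the number of distinct values present in every generation.
    """
    rng = random.Random(seed)
    current = list(data)
    support_sizes = [len(set(current))]
    for _ in range(generations):
        # Sampling with replacement from the list draws each value in
        # proportion to how often it appeared in the previous generation.
        current = rng.choices(current, k=len(current))
        support_sizes.append(len(set(current)))
    return support_sizes


# Hypothetical "human" data: two common values plus ten that appear once.
human_data = ["common_a"] * 45 + ["common_b"] * 45 + [f"rare_{i}" for i in range(10)]
sizes = resample_generations(human_data, generations=50)
print("distinct values, first vs. last generation:", sizes[0], sizes[-1])
```

Each generation can only reproduce values present in the one before it. A rare item that misses a single round of sampling is therefore gone for good, and the count of distinct values never goes up.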

When you combine model collapse with intentional poisoning via tarpits, the risk to AI companies grows. If the “human” web becomes a minefield of poisoned data and the “AI” web is a loop of synthetic echoes, the pool of high-quality training data shrinks rapidly. This makes the few remaining sources of clean, human-generated data, such as licensed archives and professional journalism, far more valuable.

For the content creator, this is a leverage play. By making the “free” data dangerous, they aim to push the industry toward a licensing model where IP holders are compensated for their contributions to the AI’s intelligence.

The Path Forward

The conflict between creators and AI labs is currently playing out in two arenas: the courtroom and the code. While high-profile lawsuits regarding copyright and fair use move through the legal system, the technical arms race continues to accelerate.

The next major checkpoint in this struggle will likely be the outcome of pending copyright rulings in the U.S. and the EU. These rulings will help determine whether “scraping for training” is lawful, whether as fair use in the U.S. or under the EU’s text-and-data-mining exceptions. Until then, expect to see more sophisticated “cloaking” tools that allow creators to keep their work visible to humans while remaining a digital wasteland for AI bots.

Do you use tools to protect your content from AI scraping, or do you think the “poisoning” approach goes too far? Let us know in the comments and share this story with other creators.

Disclaimer: This article is for informational purposes and does not constitute legal advice regarding intellectual property or copyright law.