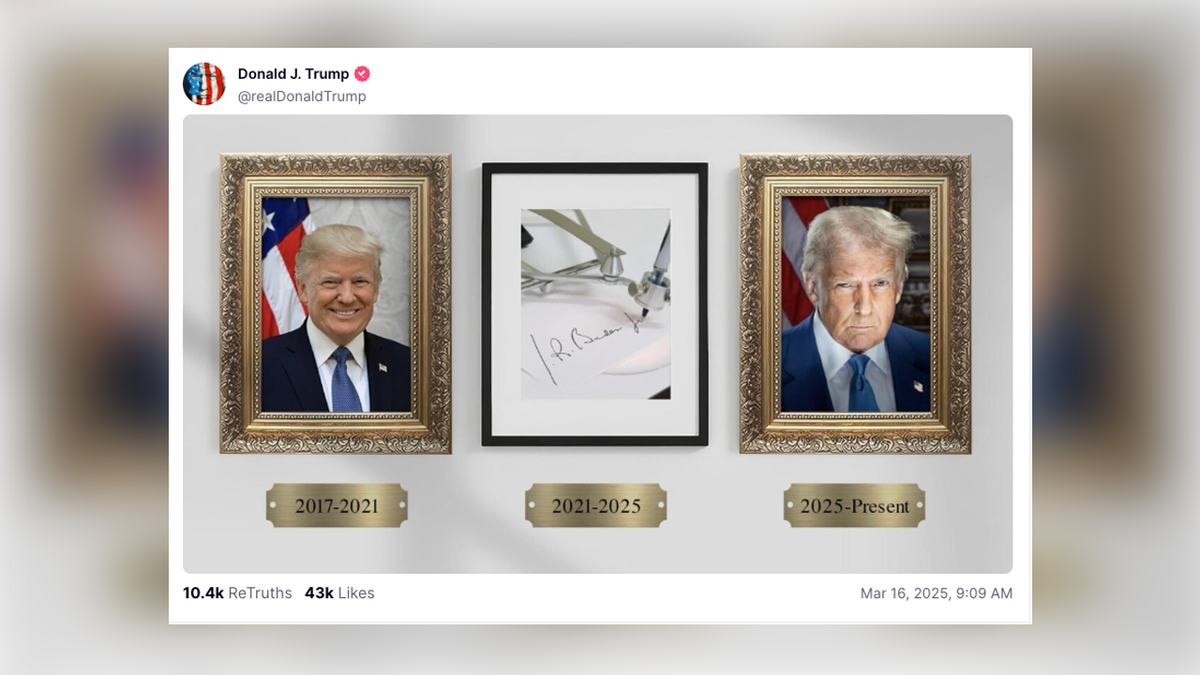

Donald Trump has once again turned to the digital frontier to sharpen his political attacks, this time deploying generative artificial intelligence to portray his Democratic rivals as exhausted and out of touch. In a post shared via his Truth Social platform, the former president circulated an AI-generated image depicting Joe Biden, Barack Obama and Hillary Clinton in a state of slumber, contrasting their perceived lethargy with his own image of vigilance and strength.

The imagery is not merely a digital caricature; it is a calculated extension of a campaign narrative that has long centered on the mental and physical fitness of his opponents. By using AI to synthesize a scene that never occurred, Trump is bypassing traditional political satire in favor of a hyper-realistic visual shorthand designed to resonate with a base already predisposed to view the Democratic establishment as a “sleeping” or stagnant entity.

For those of us who have tracked diplomacy and conflict across more than 30 countries, the arrival of generative AI in the political arena feels less like a new tool and more like a new theater of war. In regions where misinformation can trigger immediate civil unrest, the deployment of such images is seen as a precursor to a more volatile era of information warfare. In the American context, this latest post signals a shift where the “truth” of a photograph is no longer a baseline for political debate, but rather a flexible asset to be engineered for maximum emotional impact.

The Mechanics of Digital Ridicule

The image in question utilizes common generative AI tropes—saturated colors and slightly exaggerated facial features—to create a scene of collective incompetence. Biden, Obama, and Clinton are shown asleep, a visual metaphor for the “deep state” or a political dynasty that Trump claims has failed the American people. The intent is clear: to frame the Democratic lineage not as a series of leaders, but as a dormant collective that has abdicated its responsibility.

Unlike traditional photoshopped images, which often leave detectable seams or “artifacts,” generative AI allows for the creation of entirely new compositions from simple text prompts. This allows a campaign to move from reacting to the news to inventing visual “facts” that support a specific narrative. In this instance, the narrative is one of vitality versus decay.

The choice of platform is equally significant. Truth Social, which is largely unregulated compared with Meta, Google, and TikTok and the strict AI-labeling policies they are implementing, provides a sanctuary for this type of content. While other platforms are increasingly requiring “AI-generated” tags to prevent voter deception, Truth Social remains a space where the line between reality and synthesis is intentionally blurred.

The AI Arms Race in the 2024 Cycle

This incident is part of a broader pattern of AI adoption within the Trump campaign and its allied network of supporters. Throughout the current election cycle, AI has been used to create images of Trump as a heroic figure, as well as deceptive imagery depicting his legal battles or fictitious arrests of his opponents. This strategy transforms the campaign trail into a series of viral moments, where the goal is not to persuade through policy, but to dominate the visual landscape.

The danger, however, extends beyond the specific targets of the image. Political scientists and tech ethicists warn of the “Liar’s Dividend”—a phenomenon where the prevalence of AI-generated content allows politicians to dismiss real, incriminating evidence as “just AI.” When the public is conditioned to believe that any image could be fake, the truth becomes a matter of partisan choice rather than objective fact.

The stakeholders in this digital shift are not just the candidates, but the developers of the AI tools themselves. Companies like OpenAI and Midjourney have attempted to implement safeguards against creating images of public figures, yet “jailbreaking” techniques and open-source models have made these restrictions largely symbolic.

Comparing Political Communication Eras

| Era | Primary Tool | Method of Attack | Verification Level |
|---|---|---|---|
| Traditional | Print/Photography | Unflattering candid photos | High (Physical Negative) |
| Digital | Photoshop/Memes | Juxtaposition and captions | Moderate (Reverse Image Search) |
| Generative AI | Diffusion Models | Synthetic reality creation | Low (Requires Forensic Analysis) |

Impact on Voter Perception and Democratic Norms

The psychological impact of these images is rooted in “confirmation bias.” A voter who already believes Joe Biden is too old for office does not look for a source or a timestamp when seeing an image of him asleep; they see a visual confirmation of their existing belief. This bypasses the critical thinking centers of the brain, embedding the “fact” of the image more deeply than a written argument ever could.

By grouping Obama and Clinton with Biden, the image attempts to delegitimize the entire Democratic trajectory over the last two decades. It suggests a systemic failure of leadership, framing the opposition as a monolithic, exhausted entity. This simplifies complex political histories into a single, digestible image that can be shared across social media in seconds.

The lack of a cohesive regulatory framework in the United States means that these tactics will likely accelerate as Election Day approaches. While some states have attempted to pass laws requiring the disclosure of AI-generated political ads, the enforcement of such laws on private social media platforms remains a legal gray area, often clashing with First Amendment protections regarding political speech and satire.

The Global Precedent

Watching this unfold from a global perspective, the American example is being closely monitored by other nations. In my reporting from conflict zones, I have seen how synthetic media is used to destabilize trust in government institutions. When the world’s leading democracy normalizes the use of AI to mock and deceive, it provides a blueprint for authoritarian regimes to justify their own use of “deepfakes” to silence dissent or fabricate scandals.

The challenge for the modern electorate is to develop a new form of digital literacy. The ability to question the provenance of an image is no longer a niche skill for tech enthusiasts; it is a fundamental requirement for citizenship in the 21st century.

The next critical checkpoint for this issue will be the upcoming series of debates and the final stretch of campaign advertising, where AI-generated video (deepfakes) is expected to move from the fringes of social media into mainstream televised spots. Whether platforms will enforce labeling, or whether the “wild west” approach of Truth Social will become the standard, remains the central question of this digital election.

We invite our readers to share their thoughts in the comments: Should AI-generated political imagery be banned, or is it simply the modern version of the political cartoon?