The math driving the current AI boom is simple, and it’s brutal: AI wants more compute than we can currently give it. For the last few years, the industry has been obsessed with the “training” phase, the massive, energy-hungry process of feeding a model trillions of tokens of data to create a digital brain. But as these models move out of the lab and into the wild, we are entering the era of inference, where the challenge shifts from raw power to surgical efficiency.

This tension was the focal point of a recent conversation at HumanX between AMD CTO Mark Papermaster and industry analyst Ryan. The discussion highlighted a fundamental paradox in the silicon world. While AI agents are consuming unprecedented amounts of compute, they are simultaneously being used to design the very chips that will eventually make them more efficient. It is a cycle of “give and take” that is forcing chipmakers to rethink the traditional boundary between the CPU and the GPU.

Having spent years as a software engineer before moving into reporting, I’ve seen how the bottleneck in computing is rarely the logic itself, but rather the movement of data. When a CPU has to wait for a GPU to finish a task, or when data must travel across a slow bus between two different chips, performance plummets. AMD is betting its AI strategy on cutting this “data tax.”

The End of the Silicon Silo

For decades, the industry treated the Central Processing Unit (CPU) as the manager and the Graphics Processing Unit (GPU) as the specialist. The CPU handled the complex logic and OS instructions, while the GPU crunched the parallel math required for visuals or scientific simulations. In the AI era, this separation is becoming a liability.

AMD’s strategy, as Papermaster detailed, leverages a history of heterogeneous computing—the practice of using different types of processors to handle different parts of a workload. Instead of treating the CPU and GPU as separate islands, AMD is pushing toward a more unified architecture. The goal is to reduce the latency and energy cost of moving data back and forth between the two.

This approach is most evident in the MI300 series, and specifically in the MI300A. By integrating CPU and GPU cores on a single package with a shared memory pool, AMD is attempting to eliminate the transfer bottleneck that plagues traditional server setups, where the two chips sit on opposite sides of a comparatively slow interconnect. When the CPU and GPU can “see” the same memory, the system spends less time moving data and more time processing it, which is critical for the low-latency requirements of real-time AI agents.
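To make the “data tax” concrete, here is a back-of-envelope sketch. It assumes a very simple model in which each step first copies its input across a link and then computes, with no overlap between the two. The function names and all numbers are illustrative assumptions, not measured figures for any AMD part.

```python
def step_time_s(payload_bytes: float, link_bytes_per_s: float, compute_s: float) -> float:
    """Time for one step: copy the payload across the link, then compute.

    Assumes copy and compute do not overlap (a deliberate simplification).
    """
    if link_bytes_per_s <= 0:
        raise ValueError("link bandwidth must be positive")
    return payload_bytes / link_bytes_per_s + compute_s


def transfer_fraction(payload_bytes: float, link_bytes_per_s: float, compute_s: float) -> float:
    """Share of the step spent moving data rather than processing it."""
    total = step_time_s(payload_bytes, link_bytes_per_s, compute_s)
    if total == 0:
        return 0.0
    return (payload_bytes / link_bytes_per_s) / total


# Hypothetical discrete setup: 1 GB copied per step over a 32 GB/s link,
# followed by 10 ms of compute.
discrete = transfer_fraction(1e9, 32e9, 0.010)

# Hypothetical shared-memory setup: nothing is copied, so the payload is 0 bytes.
shared = transfer_fraction(0.0, 32e9, 0.010)

print(f"time spent on transfers, discrete: {discrete:.0%}")
print(f"time spent on transfers, shared:   {shared:.0%}")
```

Under these made-up numbers, most of each step in the discrete case goes to moving data. Real systems overlap copies with compute and rarely reduce copying to exactly zero, so treat this as an upper bound on the intuition rather than a prediction.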

Training vs. Inference: A Shift in Demand

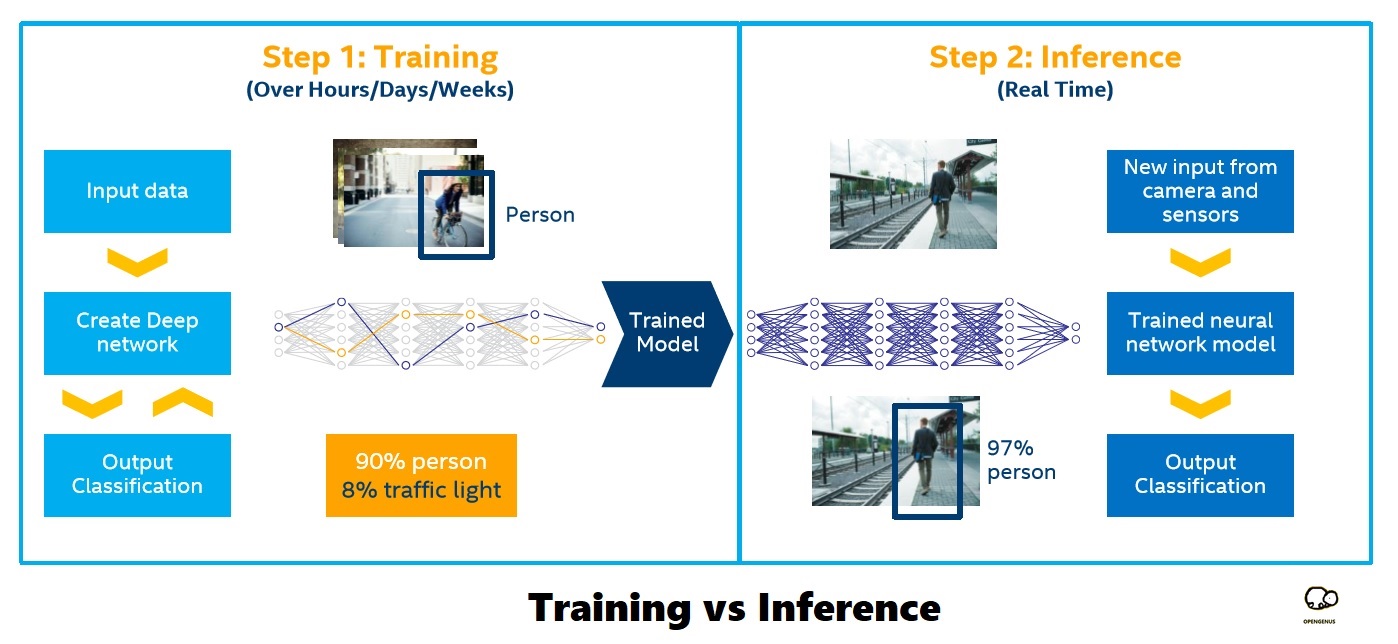

The industry is currently witnessing a pivot in how silicon is utilized. Training a model is like building a library; it requires massive, centralized power and immense memory bandwidth. Inference—the act of the model actually answering a prompt—is like a patron looking up a book. It happens billions of times a day and needs to happen instantly.

While training requires the absolute peak of GPU performance, inference is where the CPU comes back into play. Efficient inference requires a balance: the GPU handles the heavy tensor math, but the CPU manages the orchestration, the data pre-processing, and the integration with the rest of the software stack. If the CPU is too weak, the GPU sits idle, wasting expensive power.
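The idle-GPU problem can be sketched as a two-stage pipeline in which the slower stage sets the pace. The model below assumes steady-state throughput with no queuing effects, and the batch rates are hypothetical values chosen only for illustration.

```python
def gpu_utilization(cpu_batches_per_s: float, gpu_batches_per_s: float) -> float:
    """Fraction of time the GPU is busy when a CPU stage feeds it batches.

    If the CPU prepares batches more slowly than the GPU can consume them,
    the GPU waits for the remainder of the time.
    """
    if cpu_batches_per_s <= 0 or gpu_batches_per_s <= 0:
        raise ValueError("rates must be positive")
    return min(1.0, cpu_batches_per_s / gpu_batches_per_s)


# Hypothetical rates: the GPU could process 1000 batches/s,
# but the CPU only tokenizes and schedules 400 batches/s.
weak_cpu = gpu_utilization(400, 1000)

# A faster CPU stage (1200 batches/s) keeps the GPU fully busy.
strong_cpu = gpu_utilization(1200, 1000)

print(f"GPU busy with weak CPU:   {weak_cpu:.0%}")
print(f"GPU busy with strong CPU: {strong_cpu:.0%}")
```

In this simplified picture, upgrading the GPU does nothing for the first case; only a faster CPU stage or less pre-processing work raises utilization.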

| Requirement | AI Training | AI Inference |
|---|---|---|
| Primary Goal | Model Convergence | Low Latency / High Throughput |
| Compute Focus | Massive Parallelism (GPU) | Balanced Logic (CPU + GPU) |
| Memory Need | High Capacity (HBM) | High Efficiency / Low Latency |
| Scaling Path | Cluster-wide (Thousands of GPUs) | Edge or Server-side Deployment |

The Agent Paradox: Compute as both Fuel and Tool

Perhaps the most intriguing part of the current silicon race is the role of AI agents. We are seeing a paradoxical relationship where these agents are both the primary cause of compute scarcity and the primary solution to it.

On one hand, autonomous agents—AI that can plan, execute, and iterate on tasks—require far more compute than a simple chatbot. Every “thought” process an agent goes through involves multiple loops of inference, which eats through CPU and GPU cycles at an alarming rate. This is the “AI taketh” side of the equation: the more capable the agents become, the more they strain the global supply of silicon.

On the other hand, AI is now being integrated into the Electronic Design Automation (EDA) tools used to build the next generation of chips. This is the “AI giveth” side. Engineers are using AI to optimize the physical layout of transistors, manage thermal hotspots, and route signals more efficiently than manual methods allow. By using AI to design the chips, AMD and its competitors are accelerating the innovation cycle. The AI is essentially helping to build a faster, more efficient version of its own “brain.”
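The “taketh” side can be put in rough numbers. The sketch below assumes a hypothetical agent that runs a fixed number of model calls per plan step, plus some retries, and compares it with a chatbot that answers in a single call. The parameter names and values are assumptions for illustration only.

```python
def agent_inference_calls(plan_steps: int, calls_per_step: int, retries_per_step: int = 0) -> int:
    """Total model calls for one agent task under a fixed-loop assumption."""
    if plan_steps < 0 or calls_per_step < 0 or retries_per_step < 0:
        raise ValueError("counts must be non-negative")
    return plan_steps * (calls_per_step + retries_per_step)


CHATBOT_CALLS = 1  # one prompt, one response

# Hypothetical task: 8 plan steps, each needing 3 calls
# (plan, act, check) and on average 1 retry.
calls = agent_inference_calls(plan_steps=8, calls_per_step=3, retries_per_step=1)

print(f"agent calls per task: {calls}")
print(f"multiplier vs. single-call chatbot: {calls // CHATBOT_CALLS}x")
```

Real agents vary widely in how many calls they make and how long each one is, so the multiplier here only shows the shape of the problem: demand grows with the product of steps and calls per step.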

The Stakeholders in the Silicon Race

- Enterprise Data Centers: They are the primary buyers, currently struggling to balance the massive power draw of AI clusters with the need for sustainable energy.

- Software Developers: They are shifting from writing static code to orchestrating AI agents, making them dependent on the “heterogeneous” efficiency AMD is chasing.

- Chipmakers: Companies like AMD and Nvidia are no longer just selling hardware; they are selling integrated platforms of software (like ROCm) and silicon.

As the industry moves forward, the focus will likely shift from how many TFLOPS (teraflops) a chip can produce to how much “useful work” it can do per watt. The winner won’t necessarily be the one with the fastest GPU, but the one who most effectively integrates the CPU and GPU to handle the chaotic, iterative nature of AI agents.
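A small calculation shows why peak TFLOPS and “useful work per watt” can rank chips differently. The two chips below are entirely hypothetical, and “utilization” here stands in for the fraction of peak throughput a real agent workload actually sustains.

```python
def useful_tflops_per_watt(peak_tflops: float, utilization: float, watts: float) -> float:
    """Sustained throughput per watt: peak * utilization / power draw."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    if watts <= 0:
        raise ValueError("power draw must be positive")
    return peak_tflops * utilization / watts


# Hypothetical chip A: higher peak, but often stalled waiting on data.
chip_a = useful_tflops_per_watt(peak_tflops=1000, utilization=0.30, watts=750)

# Hypothetical chip B: lower peak, but tighter CPU/GPU integration keeps it busy.
chip_b = useful_tflops_per_watt(peak_tflops=800, utilization=0.55, watts=700)

print(f"chip A: {chip_a:.2f} useful TFLOPS per watt")
print(f"chip B: {chip_b:.2f} useful TFLOPS per watt")
```

With these assumed figures, the chip with the lower peak number delivers more sustained work per watt, which is the trade-off the paragraph above describes.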

The next major milestone for this architecture will be the continued rollout and optimization of the Instinct MI300 series in large-scale cloud environments, where real-world data on inference efficiency at scale will finally be available to the public.

Do you think the move toward unified CPU/GPU architectures will kill the standalone processor, or is the specialization of the GPU too powerful to ignore?