The promise was a liberation from the grid. For a decade, our digital lives have been partitioned into colorful squares: apps that we open, navigate, and close in a repetitive cycle of digital housekeeping. When Rabbit Inc. unveiled the Rabbit R1, it didn’t just pitch a new gadget; it pitched a paradigm shift. The goal was a “post-app” world where a Large Action Model (LAM) would act as a digital concierge, navigating the complexities of software on your behalf so you wouldn’t have to.

But as the initial honeymoon phase of AI hardware has faded, the reality of the R1 has proven to be far less revolutionary. In a scathing assessment, The Verge has highlighted a recurring theme in the current AI gold rush: the chasm between a visionary pitch deck and a functional consumer product. The Rabbit R1, once heralded as the successor to the smartphone, now stands as a cautionary tale about the dangers of shipping “beta” hardware to paying customers.

As a former software engineer, I find the R1 particularly frustrating not because it failed, but because of how it was framed. The marketing leaned heavily on the “Large Action Model,” suggesting a sophisticated system capable of understanding and interacting with user interfaces like a human would. In practice, however, the device often feels like a glorified wrapper for existing LLMs, packaged in a quirky, orange chassis that struggles to justify its own existence in a world where the iPhone and Android already possess nearly all its capabilities.

The Mirage of the Large Action Model

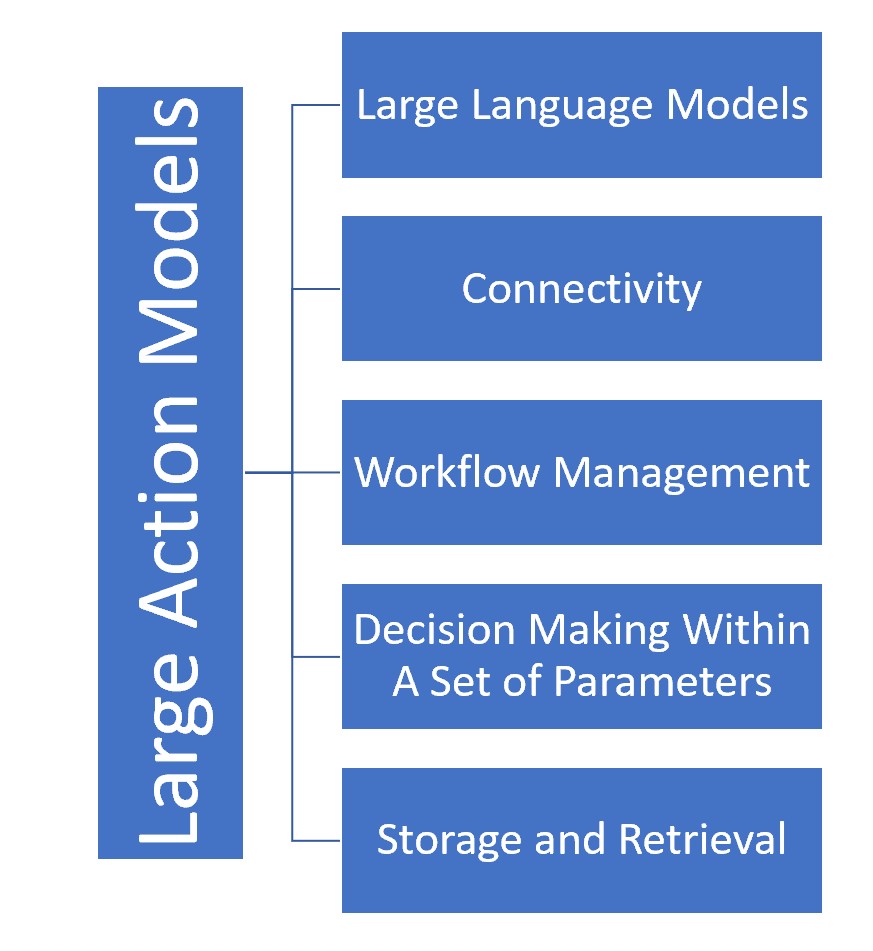

At the heart of the R1 is the LAM, which Rabbit claims can learn how to use apps by observing human interaction. The theory is elegant: instead of an API (Application Programming Interface) that requires a developer to build a bridge between two pieces of software, the LAM simply “sees” the screen and clicks the buttons. This should, in theory, allow the R1 to book an Uber or order a pizza without the user ever touching a screen.
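To make the contrast between an API bridge and screen-driven automation concrete, here is a toy Python sketch. It assumes nothing about how Rabbit actually built the LAM. `order_via_api`, `order_via_ui`, `fake_create_order`, and the pizza-app screens are all hypothetical. A real screen-reading model would presumably have to interpret rendered pixels rather than a tidy dictionary of button labels.

```python
# Toy contrast between the two integration styles described above.
# Every name here is hypothetical; this is NOT how Rabbit's LAM works.
from typing import Callable

# Each screen maps a visible button label to the screen it leads to.
Screens = dict[str, dict[str, str]]


# Approach 1: API integration. A developer wires the assistant to an
# endpoint the service publishes, so the "action" is a single call.
def order_via_api(create_order: Callable[[str], str], item: str) -> str:
    return create_order(item)


# Approach 2: UI automation. The agent only sees the current screen's
# button labels and "clicks" them in order, the way a person would.
def order_via_ui(screens: Screens, start: str, clicks: list[str]) -> str:
    current = start
    for label in clicks:
        visible = screens[current]
        if label not in visible:
            # If the app renames or moves a button, the learned sequence breaks.
            raise LookupError(f"no button {label!r} on screen {current!r}")
        current = visible[label]
    return current  # the screen the agent ends up on


# Hypothetical stand-in for a service's published ordering endpoint.
def fake_create_order(item: str) -> str:
    return f"order placed: {item}"


# A made-up pizza app described only by its screens and buttons.
pizza_app: Screens = {
    "home": {"Menu": "menu"},
    "menu": {"Margherita": "cart"},
    "cart": {"Checkout": "confirmation"},
}

print(order_via_api(fake_create_order, "margherita"))  # order placed: margherita
print(order_via_ui(pizza_app, "home", ["Menu", "Margherita", "Checkout"]))  # confirmation
```

In the API path the service itself defines what "place an order" means. In the UI path the agent depends on the app's layout staying put. If "Checkout" is renamed, the same click sequence raises an error, which is one plausible reason screen-driven agents are harder to make reliable.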

However, the execution has been riddled with inconsistencies. Many of the “actions” the R1 performs are not the result of a revolutionary new model navigating a UI, but rather traditional cloud-based integrations or simple API calls. When the system fails—which it frequently does—the user is left with a slow, unresponsive interface that provides no clear path to resolution. For the power user, the “magic” vanishes the moment you realize that performing the same task on a smartphone takes three seconds, while the R1 might take thirty, provided it doesn’t hallucinate the request entirely.

Hardware Ambitions vs. Technical Reality

The physical design of the R1 is undeniably charming, featuring a rotating camera (the “Rabbit Eye”) and a tactile, nostalgic aesthetic. But the hardware often hinders the experience. The battery life is notoriously poor, often struggling to last a full day of moderate use, and the screen—while functional—feels like an afterthought in a device meant to be “screenless.”

The most significant friction point is the reliance on a constant, high-speed internet connection to process voice commands in the cloud. This creates a latency gap that kills the spontaneity of the interaction. In the time it takes for the R1 to wake up, process the audio, send it to a server, and return an action, a user could have manually navigated to the app on their phone and completed the task. The “frictionless” experience promised in the keynote becomes a series of pauses and “I’m sorry, I didn’t catch that” responses.
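The latency argument comes down to simple addition: the stages run one after another, so every delay stacks. The sketch below models that round trip in Python using the four stages the paragraph names. The `Stage` class and the durations are hypothetical placeholders chosen only to exercise the code. They are not measurements of the R1.

```python
# Back-of-the-envelope model of a sequential cloud round trip.
# Stage names follow the paragraph above; durations are placeholders,
# NOT measured R1 latencies.
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    seconds: float


def round_trip(stages: list[Stage]) -> float:
    # Nothing overlaps, so the total delay is the sum of every stage.
    return sum(stage.seconds for stage in stages)


def slowest(stages: list[Stage]) -> Stage:
    # The single stage that contributes the most delay.
    return max(stages, key=lambda stage: stage.seconds)


# Hypothetical numbers for illustration only.
cloud_pipeline = [
    Stage("wake up", 0.5),
    Stage("process audio", 1.0),
    Stage("send to server", 1.5),
    Stage("return action", 3.0),
]

print(round_trip(cloud_pipeline))    # 6.0
print(slowest(cloud_pipeline).name)  # return action
```

Because the stages are strictly sequential, speeding up any one of them saves only that stage's share of the total. A hybrid design that handles some requests on-device can skip the network stages entirely.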

| Feature | Rabbit R1 | Smartphone (GPT-4o/Gemini) |
|---|---|---|
| Interface | Voice-first / Small Screen | Multimodal / High-Res Display |
| App Interaction | Claimed LAM (Automated) | Direct App Access / APIs |
| Connectivity | Cloud-dependent | Hybrid (On-device + Cloud) |
| Battery Life | Low / Poor | High / Optimized |

The “Wrapper” Problem in AI Hardware

The R1’s struggle reflects a broader trend in the startup ecosystem: the “wrapper” problem. Many new AI gadgets are essentially hardware shells for software that already exists. If a device’s primary value is providing a voice interface to a Large Language Model, it is competing directly with Apple’s Siri and Google Assistant, both of which are being overhauled with the same kind of generative AI capabilities that power the R1.

For the R1 to survive, it needs to offer a “killer app” experience, something a phone fundamentally cannot do. For now, the “Rabbit Eye” (the ability to see and analyze the world) is the most promising feature, but even that is often outclassed by the AI built into smartphone cameras. The device sits in a precarious middle ground: too limited to be a primary computer, and too redundant to be a necessary accessory.

Who is affected by this shift?

- Early Adopters: Users who paid for the vision of a post-app world and received a buggy, first-generation prototype.

- AI Startups: Companies now facing increased scrutiny regarding their “proprietary” models versus simple API integrations.

- Big Tech: Companies like Apple and Google, who are watching these failures to learn exactly where the boundaries of AI hardware lie.

The Road to Redemption

Despite the critical reception, Rabbit Inc. maintains that the R1 is a living platform. Because the “intelligence” resides in the cloud, the device can theoretically be upgraded overnight without the user needing to buy new hardware. Software patches have already begun to address some of the most egregious bugs, and the company is aggressively pushing updates to improve the LAM’s reliability.

The question remains whether software updates can fix a fundamental utility problem. If the smartphone remains the most efficient way to interact with the digital world, no amount of patching will make a secondary device feel essential. The R1 is a bold experiment, but it serves as a reminder that in tech, a great vision cannot substitute for a polished product.

The next critical milestone for the R1 will be the rollout of its promised “deep integrations” with more third-party services, which will determine whether the LAM can actually move beyond basic tasks into complex, multi-step workflows. We will be watching upcoming software releases to see whether the “action” in Large Action Model becomes a reality or remains a marketing slogan.

Do you think the “post-app” world is possible, or is the smartphone too entrenched to be replaced? Share your thoughts in the comments below.